How Explainpaper used Replica to predict A/B test outcomes before launch

Explainpaper, a research platform with 400,000+ users, used Replica to simulate an A/B test on its signup funnel. In under an hour, and before the live test concluded, the simulation correctly predicted the direction of change for both primary funnel metrics.

Explainpaper's Challenge

Explainpaper is a leading research platform that helps readers understand complex academic papers. With over 400,000 users worldwide, the company set out to improve the new-user signup funnel on its website – a critical step for growth.

Like many experiment-heavy companies, Explainpaper faced a familiar challenge: deciding which A/B tests to run. Each live test consumes limited user traffic, requires weeks of data collection, and delays dozens of other A/B tests waiting in the queue. The stakes are high – a bad test can distort overlapping experiments and hurt user experience or revenue.

This set the stage for exploring whether A/B test outcomes could be predicted before launch – a challenge that Replica was built to solve.

The worst part of having lots of ideas is that they're often bad. Before, I had to test the bad ideas on real users and take the loss. Thanks to Replica, now I don't have to.

— Aman Jha, Co-founder of Explainpaper

Introducing Replica

Replica allows experiment-heavy companies to predict in minutes which A/B tests are worth running in real life.

Replica is powered by thousands of AI browser agents modeled on real user data and fine-tuned on real user actions. It simulates an A/B test by spinning up browser sessions in which agents reason, navigate, and complete the target web task across control and treatment variants – mirroring real user interactions.

The result: experiment-heavy companies can forecast directionally accurate A/B test results in minutes and shortlist which A/B tests are worth running in real life – saving time and traffic, and reducing risk.

Methodology

The Explainpaper team hypothesized that emphasizing how their platform makes papers easier to read, rather than faster to read, would increase new user signups. To test this, they launched a new A/B test via Statsig comparing two website variants – with differences in wording and visuals – across two key funnel stages, Landing → Pricing and Pricing → Signup:

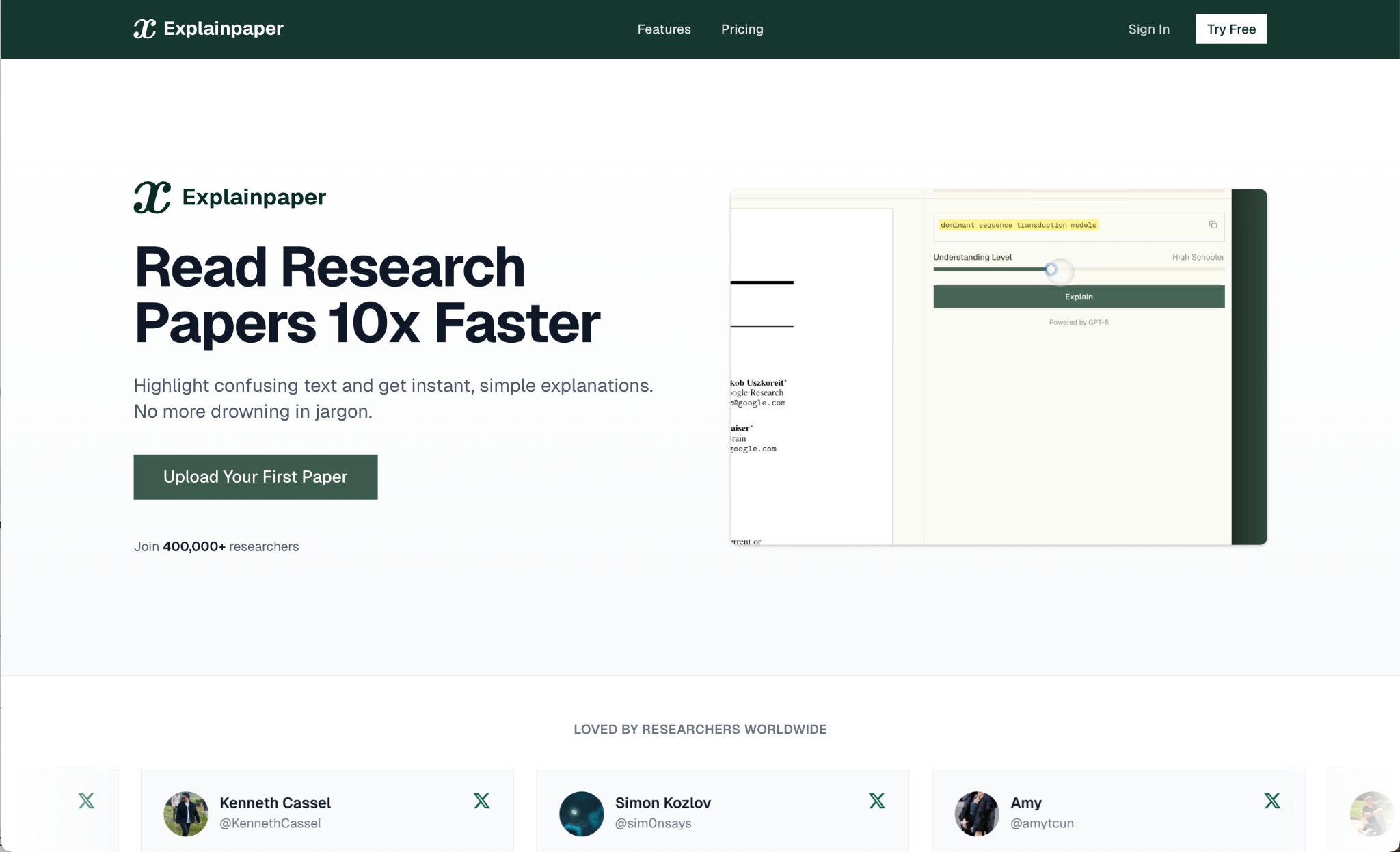

Control Variant: "Read Research Papers 10x Faster"

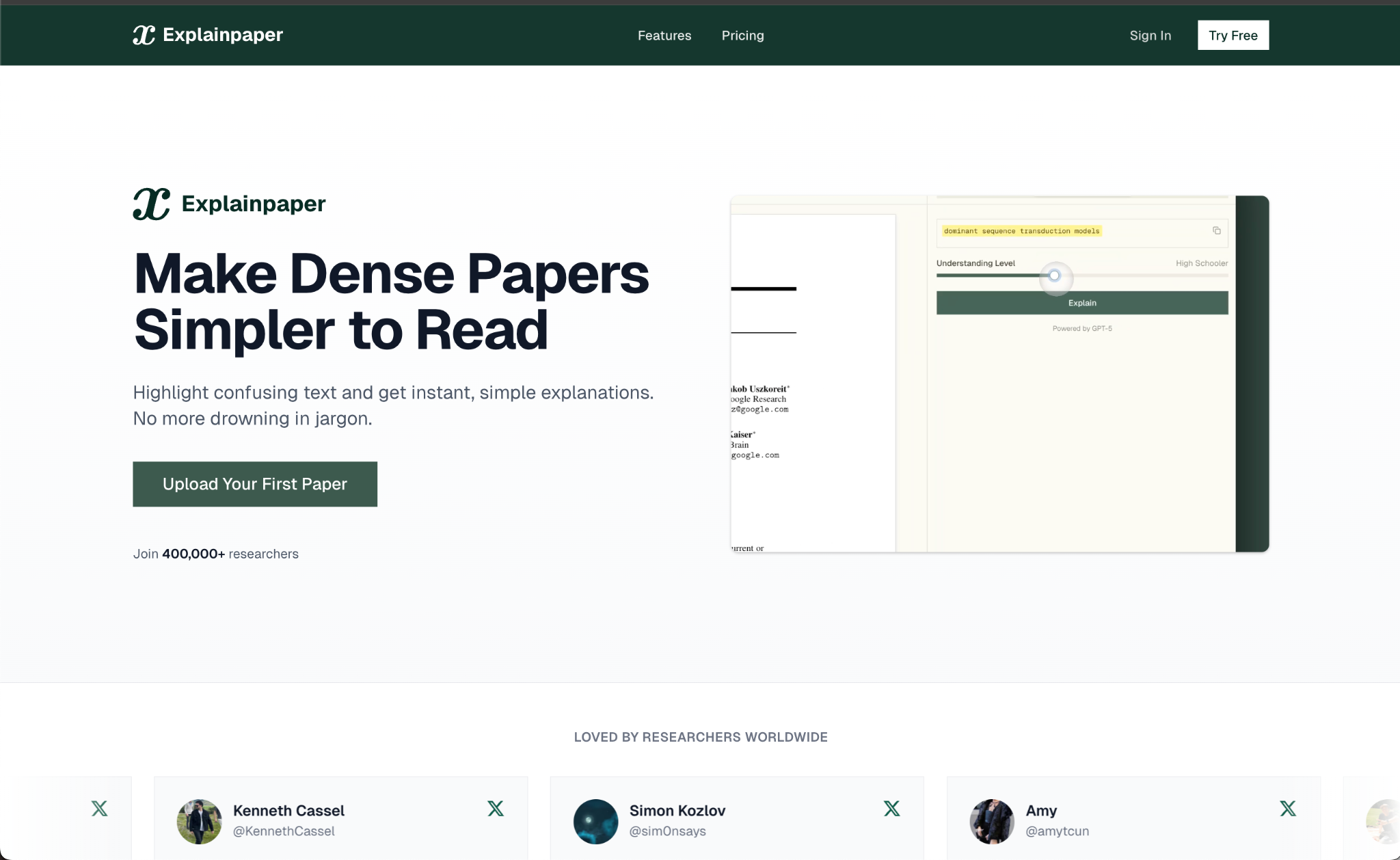

Treatment Variant: "Make Dense Papers Simpler To Read"

Replica's simulated A/B test mirrored the real A/B test exactly – the same control and treatment website variants and the same metrics.

To maximize the accuracy of the A/B test simulation, Replica's AI agents were modeled on Explainpaper's real user data from Statsig and Supabase – capturing demographic and archetype distributions – and fine-tuned on real user actions to reflect real user behavior on the Explainpaper website. This ensured that the simulated population resembled Explainpaper's real user base in composition and intent.

Replica ran a thousand browser simulations in just minutes. Each AI browser agent was assigned a unique persona and website variant, then tasked with exploring the site and deciding whether to sign up for an account based on its personality, motivations, and context.
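The assignment step described above can be sketched roughly as follows. This is a minimal illustration, not Replica's implementation: the archetype names, their weights, and the `SimulatedAgent` type are hypothetical placeholders, and the even split between variants is an assumption. The agent's browsing and signup decision are not modeled here.

```python
import random
from collections import Counter
from dataclasses import dataclass

# Hypothetical archetype shares; in practice these would be estimated
# from real user data rather than hard-coded.
ARCHETYPE_WEIGHTS = {
    "phd_student": 0.5,
    "research_consultant": 0.3,
    "industry_professional": 0.2,
}
VARIANTS = ("control", "treatment")


@dataclass(frozen=True)
class SimulatedAgent:
    agent_id: int
    archetype: str
    variant: str


def build_agents(n_agents: int, seed: int = 0) -> list[SimulatedAgent]:
    """Sample one archetype per agent and alternate variants for an even split."""
    rng = random.Random(seed)
    archetypes = rng.choices(
        population=list(ARCHETYPE_WEIGHTS),
        weights=list(ARCHETYPE_WEIGHTS.values()),
        k=n_agents,
    )
    return [
        SimulatedAgent(agent_id=i, archetype=a, variant=VARIANTS[i % 2])
        for i, a in enumerate(archetypes)
    ]


agents = build_agents(1000)
variant_counts = Counter(agent.variant for agent in agents)
```

Because archetypes are drawn at random, alternating variants by index keeps the two arms the same size without tying the variant to any particular archetype.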

Results

The results tell a clear story: Replica's simulated A/B test achieved directional accuracy for both primary funnel conversion metrics, showing how teams can use simulations to shortlist high-potential A/B test candidates to run in real life.

| Metric | Source | Absolute Δ | Relative Δ | Directional Accuracy |
|---|---|---|---|---|
| Conversion Landing → Pricing | Statsig real A/B test | -0.24% | -2.63% | |
| Conversion Landing → Pricing | Replica simulated A/B test | -1.52% | -3.49% | Match |
| Conversion Pricing → Signup | Statsig real A/B test | -1.92% | -8.14% | |
| Conversion Pricing → Signup | Replica simulated A/B test | -6.06% | -7.30% | Match |
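The directional check behind the table above can be expressed in a few lines. The sketch below is illustrative only: it treats a prediction as directionally accurate when the simulated and real deltas share the same sign, which is an assumed reading of "directional accuracy", and the function and variable names are hypothetical. The figures are copied from the table.

```python
def same_direction(real_delta: float, simulated_delta: float) -> bool:
    """True when both deltas are non-zero and have the same sign."""
    return real_delta * simulated_delta > 0


# (absolute delta, relative delta) in percent, copied from the table above.
RESULTS = {
    "Landing → Pricing": {"real": (-0.24, -2.63), "simulated": (-1.52, -3.49)},
    "Pricing → Signup": {"real": (-1.92, -8.14), "simulated": (-6.06, -7.30)},
}

directional_match = {
    metric: all(
        same_direction(real, simulated)
        for real, simulated in zip(values["real"], values["simulated"])
    )
    for metric, values in RESULTS.items()
}

for metric, matched in directional_match.items():
    print(f"{metric}: {'match' if matched else 'mismatch'}")
```

Note that this compares direction only; it says nothing about how close the simulated magnitudes are to the real ones.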

[Charts: Conversion Landing → Pricing; Conversion Pricing → Signup]

Beyond directional accuracy, the qualitative layer of Replica's simulations surfaced deeper insights. Session replays and reasoning logs revealed how and why user behaviors differed between variants – visibility that traditional A/B tests can't offer.

Examples of real AI browser agent logs from the simulation:

"Try Free" sounds interesting. Does that mean I can use it without signing up fully? Or is it a free trial *after* signing up? I'm cautious about signing up for things immediately, especially if they might ask for payment later. I'm a student on a tight budget.

- Ethan Clarke, 23, Male, PhD Student, United Kingdom

I'm a bit annoyed. I really wanted to just upload a page or two and see how good the explanations are before giving my email and setting up yet another account. I have so many accounts already.

- Mei Chung, 42, Female, Freelance Research Consultant, Taiwan

"Read Research Papers 10x Faster" is a bold claim, but intriguing. It says "Highlight confusing text and get instant, simple explanations." This sounds exactly like what I need for my consulting work.

- Marcus Bennett, 32, Male, Management Consultant, United States

We expect this ability to audit individual simulations to become a powerful tool for experiment teams conducting post-simulation analyses.

Even when we run AB tests, we have no idea why one performed better. Thanks to the reasoning logs, we have something to go off of, so our next tests are more informed.

— Aman Jha, Co-founder of Explainpaper

Lessons Learned

Replica's simulations were directionally accurate for both primary signup funnel conversion metrics, demonstrating Replica's ability to forecast A/B test outcomes before they're run live.

This case study validated two core advantages of Replica's simulations – each reinforced by speed and depth of insight:

1. Directional Accuracy

Replica's simulations mirrored the same directional changes seen in Explainpaper's live A/B test, confirming their credibility as an early forecasting tool. The full simulation completed in under an hour, making near real-time experiment prediction possible.

2. Prioritization Power

Alignment with real results showed that Explainpaper can use Replica to identify high-potential A/B tests and skip low-impact ones, saving weeks of time and user traffic. Session replays and agent reasoning logs revealed why behaviors differed between variants – qualitative insight traditional A/B tests can't provide.

As Replica continues to advance its modeling accuracy, we expect results to align even more closely with real A/B tests – reinforcing Replica's role as the first step in every modern experimentation pipeline.

The fact that Replica can accurately predict whether A or B is better as a binary decision means we can test extremely rapidly. We no longer have to wait for data collection.

— Aman Jha, Co-founder of Explainpaper